Bias in the modern era is no longer simply expressed—it is engineered, scaled, and deployed through systems designed to appear neutral.

By InnerKwest Systems Desk | March 27, 2026

A System Rewritten, Not Reformed

Technology was expected to reduce bias.

Instead, it has operationalized it.

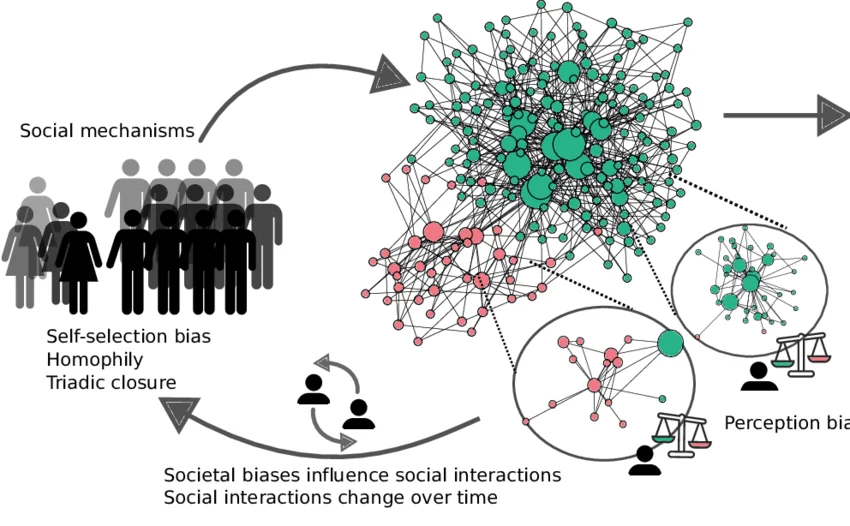

The prevailing assumption was that systems—driven by data, automation, and machine logic—would remove human subjectivity from decision-making. But systems do not emerge in isolation. They are built on historical inputs, trained on existing patterns, and optimized for outcomes defined by prior success.

They do not eliminate bias.

They inherit it.

In the modern era, bias is no longer primarily interpersonal. It is infrastructural—embedded into the mechanisms that determine visibility, funding, and access.

Investor Bias: Pattern Recognition as Gatekeeping

Black founders continue to receive less than 2% of venture capital funding.

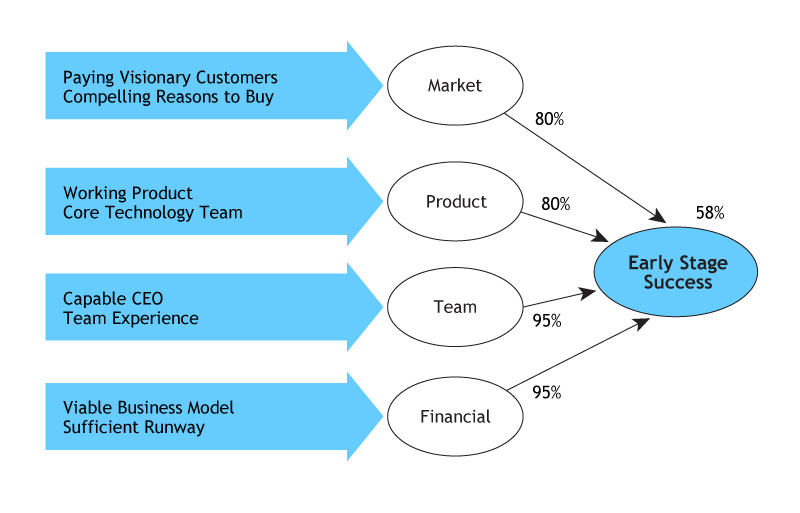

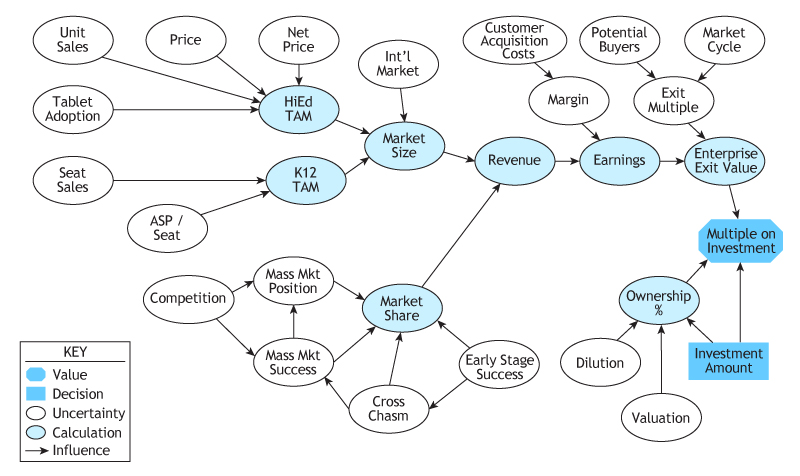

This is often attributed to pipeline limitations or market readiness. The data suggests a different mechanism: pattern recognition.

Venture capital does not operate purely on innovation. It operates on familiarity.

- Founders are evaluated against previous successes

- Networks influence perceived credibility

- Institutional backgrounds signal “fit”

This process—commonly described as “pattern matching”—is not neutral. It reinforces historical outcomes by prioritizing what has already been validated.

The result is a closed feedback loop:

Capital flows toward what resembles past success, limiting what can emerge as future success.

For founders operating outside those established patterns, the burden shifts:

- More traction required before engagement

- Greater proof demanded before belief

- Higher thresholds applied before access

This is not framed as exclusion.

It is framed as risk management.

Platform Governance: Visibility as a Controlled Resource

Digital platforms are often described as democratizing access.

In practice, they regulate it.

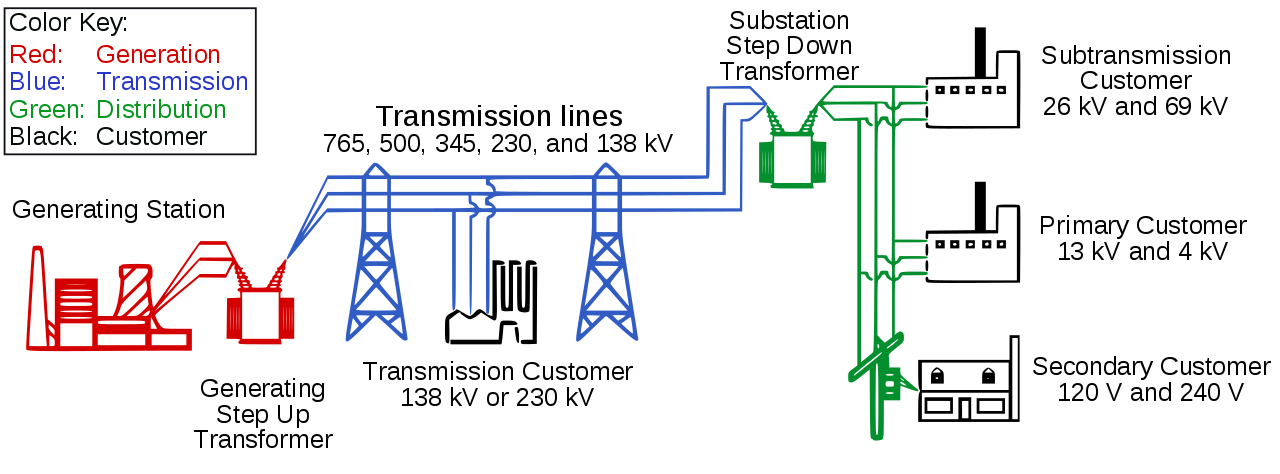

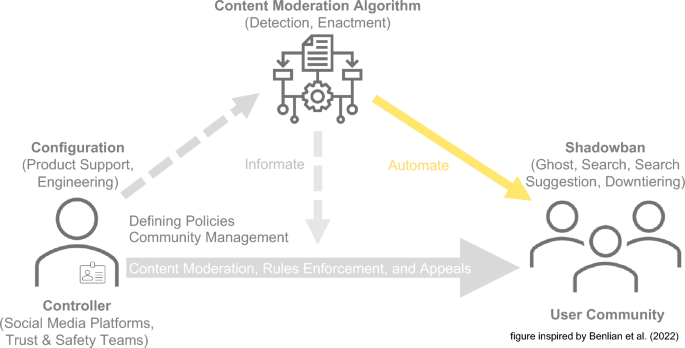

Content distribution is governed by algorithmic systems designed to optimize engagement, reduce risk, and enforce policy at scale. These systems operate through layered moderation—automated filters supported by human review.

The outcome is not always transparent.

On platforms such as Instagram and X (formerly Twitter), creators and projects have reported:

- Inconsistent visibility

- Unexplained reach suppression

- Enforcement actions without clear violations

These outcomes are rarely framed as bias. They are attributed to system behavior, policy enforcement, or algorithmic adjustment.

But the functional impact remains:

Visibility becomes conditional, and distribution becomes uneven.

In digital economies, distribution is not peripheral.

It is leverage.

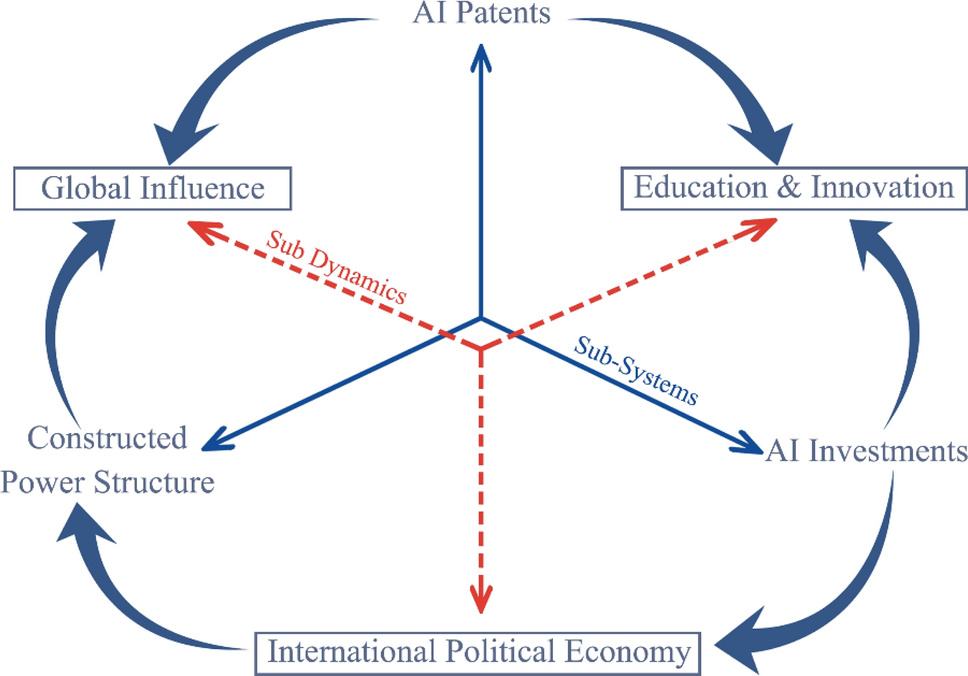

The Decentralization Question

Web3 has been positioned as a structural alternative—an environment where code replaces gatekeepers and access is determined by participation rather than permission.

The reality is more complex.

While decentralization removes certain barriers, it does not eliminate underlying dynamics:

- Early capital still concentrates influence

- Network effects still shape visibility

- Technical access still defines participation

Without intentional design, decentralized systems risk replicating the same structural imbalances they were meant to bypass.

Tools may change. Power distribution often does not.

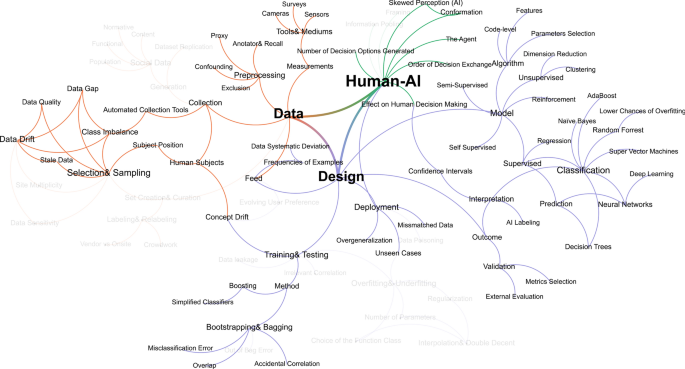

Applied Bias as Infrastructure

What distinguishes modern bias is not its presence, but its execution.

Bias is now:

- Embedded in training data

- Reinforced through optimization models

- Scaled across platforms

- Obscured by system complexity

It is no longer always visible at the point of interaction.

It operates upstream—within the logic of the system itself.

This makes it more difficult to identify, and easier to normalize.

On the Record

There is no immediate correction mechanism for systems operating at this scale.

Nor is there a clear incentive for those benefiting from existing structures to redesign them.

But documentation matters.

Clarity matters.

Because systems that are not examined tend to persist without challenge.

Final Observation: Bias Did Not Disappear—It Was Engineered

Technology was not a departure from existing systems.

It was an extension of them.

The expectation that automation would neutralize bias overlooked a fundamental reality:

Systems reflect the priorities, data, and assumptions that define them.

The question is no longer whether bias exists within modern technology.

It is how deeply it is embedded—and how consistently it is reproduced.

InnerKwest places this on record as observation, not speculation.

Because what is not acknowledged is often accepted.

And what is accepted becomes unapologetically standard.

At InnerKwest.com, we are committed to delivering impactful journalism, deep insights, and fearless social commentary. Your cryptocurrency contributions help us execute with excellence, ensuring we remain independent and continue to amplify voices that matter.

To help sustain our work and editorial independence, we welcome contributions of any amount in the tokens listed below. Support independent journalism:

BTC: 3NM7AAdxxaJ7jUhZ2nyfgcheWkrquvCzRm

SOL: HxeMhsyDvdv9dqEoBPpFtR46iVfbjrAicBDDjtEvJp7n

ETH: 0x3ab8bdce82439a73ca808a160ef94623275b5c0a

XRP: rLHzPsX6oXkzU2qL12kHCH8G8cnZv1rBJh (Destination Tag: 1068637374)

SUI: 0xb21b61330caaa90dedc68b866c48abbf5c61b84644c45beea6a424b54f162d0c

Contributions are also accepted through our Support Page.

InnerKwest maintains a revelatory and redemptive discipline, relentless in advancing parity across every category of the human experience.

© 2026 InnerKwest®. All Rights Reserved | Haki zote zimehifadhiwa | 版权所有. InnerKwest® is a registered trademark of Inputit™ Platforms Inc. Global. No part of this publication may be reproduced, distributed, or transmitted in any form or by any means without prior written permission. Unauthorized use is strictly prohibited. Thank you for standing with us in pursuit of truth and progress!